Why the AI CEOs Aren't Evil, And Why That's the Problem

The most consequential founders in artificial intelligence aren't motivated by malice. The harm they produce is structural, architectural, and far harder to name. Which is precisely why it persists.

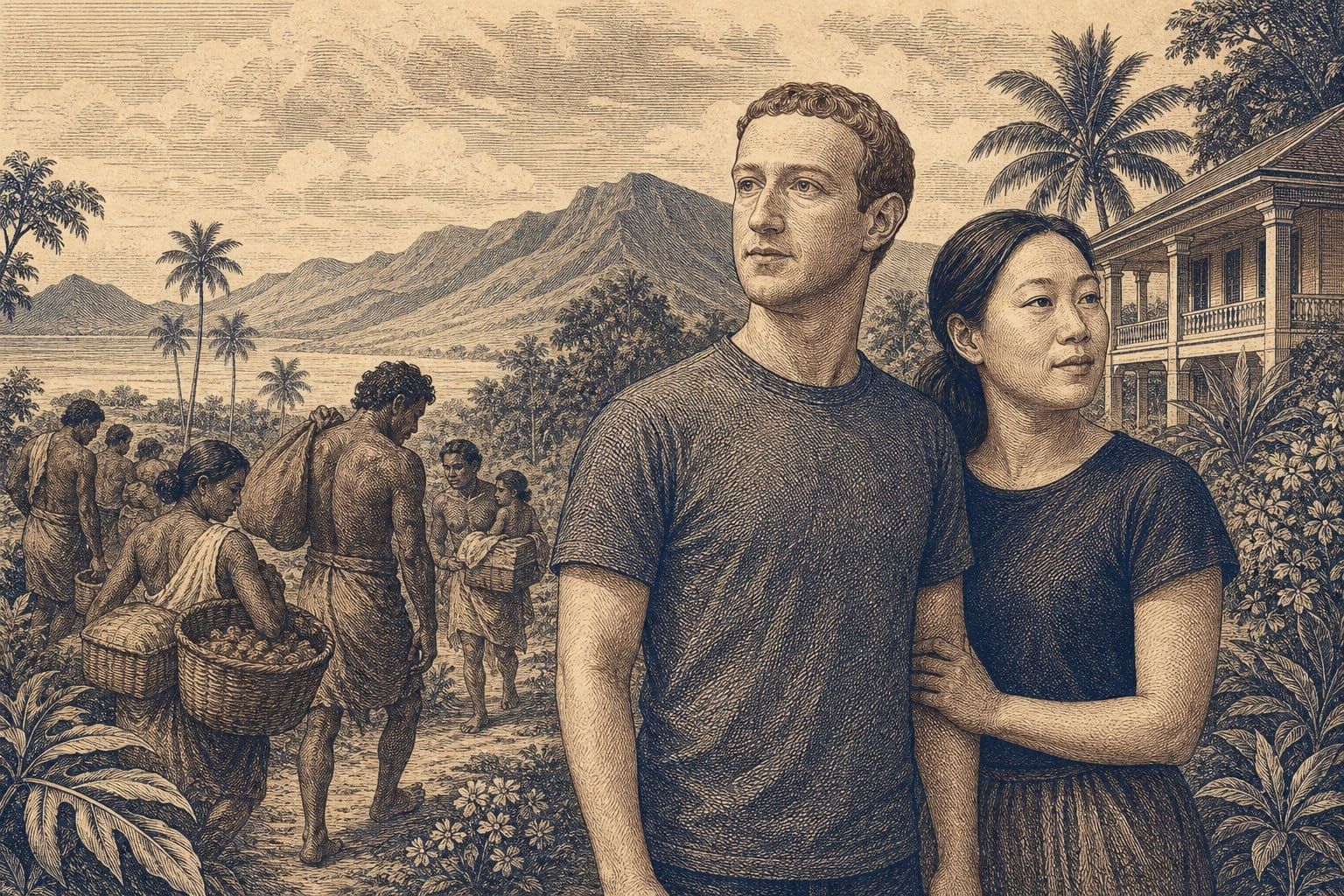

On December 28, 2016, Mark Zuckerberg published a Facebook post about the property he and Priscilla Chan had purchased on Kauai's North Shore. He described falling in love with the island's cloudy green mountains and wanting to "plant roots and join the community." Two days later, on December 30, three LLCs controlled by Zuckerberg filed eight quiet-title lawsuits in Kauai County Court against hundreds of people, many of them descendants of Native Hawaiian families whose ancestral kuleana parcels lay within his 700-acre estate. The lawsuits sought forced public auction of the land to the highest bidder, which, given the circumstances, would be Zuckerberg. Within a month, after sustained public backlash, Zuckerberg dropped the suits and published an op-ed in the local Garden Island newspaper, saying he regretted not taking the time to understand the quiet-title process and its history.

Plant roots. File lawsuits. Receive backlash. Withdraw. Express regret for a procedural misunderstanding. Move on.

If you want to understand why the standard public vocabulary for holding AI founders accountable keeps failing, study that sequence. It doesn't fit the story the public wants to tell. Zuckerberg wasn't being greedy, not in any conventional sense. He wasn't trying to evict people from homes. His lawyers were running a standard title-clearing process. The problem is that the process was optimized for property consolidation and structurally blind to the cultural, genealogical, and historical weight of what it was clearing. What caught the problem wasn't moral awareness. It was PR exposure. The lawsuits stopped when the reputational cost exceeded the optimization benefit. The moral substance of the kuleana claims entered the decision frame only as a cost signal, never as an input.

This pattern (locally rational action inside an optimization frame that treats affected people's interests as constraints to be removed rather than inputs to be weighted) is the defining governance failure of the AI age. And we do not yet have a public vocabulary adequate to name it.

Why the Standard Vocabulary Keeps Failing

Public accountability for tech founders has cycled through the same set of framings for a decade, and each one breaks on contact with the evidence.

The first framing is greed. They're in it for the money; they're exploiting workers and users to maximize personal extraction. Applied to the most visible AI founders, this framing runs immediately into complications. Zuckerberg and Chan have pledged 99% of their Facebook shares, currently worth something approaching $100 billion, to the Chan Zuckerberg Initiative. Sam Altman testified before the Senate in May 2023 asking Congress to regulate AI and proposing licensing requirements for powerful models. These aren't the signatures of straightforward extraction. The greed frame produces a fact-check the founder can survive, and the accountability conversation stalls.

The second framing reaches for clinical pathology: they're narcissists, sociopaths, people with a diagnosable empathy deficit. This framing demands evidence the public record doesn't supply and invites a clinical debate no journalist or commentator is equipped to adjudicate. It also mislocates the problem. Even if you could establish that any given founder met DSM-5 criteria for Narcissistic Personality Disorder or Antisocial Personality Disorder (which would require a clinical evaluation, not an op-ed), the diagnosis would not explain the structural pattern. Plenty of people meet those criteria without building systems that affect billions of users. And plenty of people who would pass any clinical screening are building exactly those systems. The problem is not in the personality; it's in the architecture.

The third framing is moral outrage without a framework: they're bad, they should feel bad, they should stop. This framing produces heat but not traction. It demands a conscience-based correction from people who are already making conscience-based decisions, just within a decision architecture that doesn't weight the right inputs. Telling an optimization-harm actor to "do the right thing" is like telling a GPS to take the scenic route. The device isn't refusing; it literally has no variable for "scenic." You have to change the map.

The Optimization Harm Distinction

What I'm proposing here, as a futurist's framing rather than a clinical diagnosis, is a distinction between two fundamentally different kinds of harm that share a surface resemblance but require entirely different accountability tools.

Greed harm is what most public criticism assumes. The actor is maximizing personal extraction at others' expense. Intent is present. The vocabulary of corporate accountability (exploitation, corruption, profiteering) was designed for this pattern and works well against it. You name the extraction, you trace the money, you apply legal or reputational consequences. The traditional toolkit is calibrated for exactly this kind of actor.

Optimization harm is structurally different. The actor is not maximizing extraction; they are optimizing toward a stated goal they sincerely believe is good. The harm comes not from intent but from architecture: the optimization function treats the affected parties' interests as constraints to be removed rather than inputs to be weighted. The actor is responsive to costs, especially reputational costs, but structurally oblivious to moral claims that have no slot in the optimization frame. Causes are taken up and then abandoned, not on their merits but because the optimization function has reweighted.

A vocabulary aid from the empathy literature helps make this more precise. The distinction between cognitive empathy (the capacity to know what another person is feeling) and affective empathy (the drive to respond to that knowledge appropriately) has been a feature of empathy research for decades, long before it was popularized in any single framework. What the optimization-harm pattern looks like, from a futurist's vantage, is not a deficit in either form of empathy. The cognitive capacity is intact. What's missing is the architectural occasion to deploy it. The founder's decision context has been carefully engineered, through layers of legal structure, capital obligation, competitive pressure, and board governance, to insulate the decision-maker from the feedback that would otherwise force perspective-taking.

This is not pathology. It is normal-range cognition operating inside a context that has been built, layer by layer, to keep moral claims from reaching the decision function. The capacity for empathy is present. The architecture never calls on it.

A critical clarification: I want to be careful not to import the deficit-centered framings of empathy that some popular psychology has applied to neurodivergent populations. The pattern I'm naming is not characterological. It's not about any group of people's inherent traits. It's structural to a decision context. Damian Milton's concept of the "double empathy problem," published in Disability & Society in 2012, is a much better reference than any deficit-centered model: Milton frames apparent empathy gaps as bidirectional failures of connection between people whose cognitive contexts differ, not as deficits inside any one party. That is precisely the architectural-asymmetry framing this piece needs. The AI founders are not incapable of perspective-taking. They operate inside structures that make perspective-taking unnecessary, and those structures can be changed.

That is the optimization-harm signature: not cruelty, but insulation.

The Pattern in Practice

The CZI LLC. When Zuckerberg and Chan announced the Chan Zuckerberg Initiative in December 2015, they structured it as a Delaware-based limited liability company rather than a private foundation. Zuckerberg explained that the LLC offered "flexibility to execute our mission more effectively" and, to his credit, he noted the couple would receive no immediate tax benefit from the arrangement. But the structural consequences of the choice are worth cataloging. A private foundation is bound by mandatory minimum annual payouts (currently 5% of assets), IRS disclosure requirements, prohibitions on self-dealing, and restrictions on political activity. The CZI LLC is bound by none of these. Zuckerberg retains control of the Facebook shares transferred to the entity. The LLC can make political donations, lobby, invest in for-profit ventures, and change its objectives at will. Crucially, its finances are not public. As the Nonprofit Quarterly observed, the structure provides "no tax breaks plus no accountability."

A greed-harm actor would not bother with the philanthropic framing. An optimization-harm actor builds the philanthropy and engineers out every mechanism of external accountability. The optimization function approved the structure because it maximized flexibility for the founder. The affected parties (the public, the grantees, the communities the CZI claims to serve) have no structural role in governance. Their interests are not inputs; they are, at best, the occasion for press releases.

A fair accounting should note that CZI has made substantial real-world investments: roughly $7 billion in grants since 2015, significant contributions to biomedical research through the Chan Zuckerberg Biohub, and meaningful work on affordable housing in the Bay Area. The question is not whether good outcomes have occurred. It is whether any mechanism exists to ensure they continue, or to redirect the enterprise if they don't, when the only people with structural authority over the entity are the two people who created it.

The Kauai kuleana lawsuits. The December 2016 sequence bears repeating in the optimization-harm frame because it is almost diagrammatically clean. The quiet-title process is a standard legal mechanism for clearing title. As Zuckerberg's attorneys noted, it is the "prescribed process" in Hawaii for identifying partial owners and ensuring they receive fair compensation. Inside the optimization frame, the filing was routine: a property-consolidation action designed to produce clear title. Outside the optimization frame, in the actual lived world of the kuleana descendants, the lawsuits threatened to sever families from ancestral land that had been theirs since the Kuleana Act of 1850, using a legal mechanism that the Ka Huli Ao Center for Excellence in Native Hawaiian Law has described as a driver of Native Hawaiian dispossession.

A complication that most coverage has ignored: Carlos Andrade, a retired professor of Hawaiian Studies at the University of Hawaii and a great-grandson of Manuel Rapozo (one of the original kuleana owners), was reportedly assisting Zuckerberg's legal team as a co-plaintiff. Andrade's reasoning, as reported in the Honolulu Star-Advertiser, centered on ensuring that the land would not be lost to the county for unpaid property taxes and that his extended family would receive fair compensation rather than watching their fractional shares dilute further across generations. This complicates the simplest version of the neocolonial narrative, and that's precisely the point. The optimization-harm argument is structural, not narrative. The problem was never that Zuckerberg lacked good intentions or even that no Hawaiian stakeholder found the process reasonable. The problem was that the decision architecture treated the cultural and historical weight of kuleana claims as invisible until the PR cost made them visible. File, receive cost signal, withdraw. The moral substance entered only as reputational arithmetic.

OpenAI's structural conversions. The pattern is perhaps most legible at OpenAI, where the structural transformations have been serial and documented. OpenAI was founded in 2015 as a nonprofit with the stated mission to develop AI "in the way that is most likely to benefit humanity as a whole, unconstrained by a need to generate financial return." In 2019, OpenAI created a capped-profit subsidiary, with returns limited to 100 times any investment, and its own operating agreement warned potential investors to "think of investments in the spirit of donations." In July 2023, OpenAI announced a Superalignment team, led by co-founder Ilya Sutskever and researcher Jan Leike, with a commitment to dedicate 20% of computing power over four years to the long-term problem of controlling superintelligent AI. By May 2024, less than a year later, both Sutskever and Leike had departed and the Superalignment team was disbanded. Leike wrote on his way out that "safety culture and processes have taken a backseat to shiny products."

In May 2023, Altman had told Congress he supported federal licensing for AI companies, a new oversight agency, and mandatory safety standards for powerful models. Senator Dick Durbin remarked that he could not recall industry leaders coming to Congress to plead for regulation. By May 2025, at a subsequent Senate hearing, Altman's posture had shifted. Asked about proposals for NIST to set AI standards, he replied, "I don't think we need it." He advocated instead for "sensible regulation that does not slow us down." Meanwhile, the structural conversions continued. In December 2024, OpenAI announced plans to restructure so that its nonprofit arm would no longer control the for-profit entity. After backlash from former employees, civil society organizations, and multiple state attorneys general, the company partially retreated, but by October 2025, the recapitalization was complete. The nonprofit now holds a 26% minority stake in a public benefit corporation. Microsoft holds 27%. The cap on investor returns has been removed. And in its 2024 IRS filing, as reported by The Conversation, OpenAI had quietly deleted the word "safely" from its mission statement.

Each of these moves was locally rational inside the optimization frame. The capped-profit was necessary to attract capital. The Superalignment team was necessary until it competed for compute resources. The call for regulation was necessary until it threatened to constrain growth. The nonprofit governance was necessary until it interfered with fundraising. The word "safely" was necessary until it became a liability. At no point does the decision-maker need to be malicious. The optimization function simply reweights, and commitments that have been reweighted to zero are allowed to fall away. The people who relied on those commitments (the safety researchers, the nonprofit donors, the public that heard a CEO testify under oath about the importance of regulation) have no structural recourse.

Why the Standard Toolkit Fails

Each tool in the standard accountability toolkit was calibrated for greed harm, and each misfires on optimization harm for the same structural reason: it assumes the actor's behavior can be changed by appealing to their conscience or penalizing their conduct. Optimization-harm actors already adjust to penalties. That's the problem.

Criminal prosecution requires demonstrable intent to harm. Optimization-harm actors can sincerely testify that they intended to benefit humanity. They often did. Ethics boards and advisory panels offer recommendations the founder is free to ignore, and at OpenAI, the board that tried to exercise actual governance power was replaced within a week. Personal moral appeals assume the decision-maker has access to the relevant moral information and chooses to ignore it; optimization-harm actors are genuinely insulated from it by layers of structure that filter out everything except cost signals. And journalism that frames the story as "founder is bad" produces exactly the fact-check dynamic that lets the founder off the hook: they can point to philanthropic commitments, Senate testimony, and stated values, and the accountability narrative collapses.

The problem is not that these founders lack conscience. It is that only certain categories of cost (legal exposure, financial risk, reputational damage) have a slot in the optimization function. Moral claims from affected parties do not. They can be heard, acknowledged, even sympathized with, and then the optimization function continues without them, because no structural mechanism requires their inclusion.

What Would Actually Work

If the harm is architectural, the intervention must be architectural. What constrains optimization harm is not conscience, not advisory boards, not pledges of future good behavior, but governance that inserts the affected parties' interests as inputs to the decision function rather than as constraints on it. Concretely, this means building structures that cannot be reweighted away.

It means stakeholder voting rights: not advisory seats, not town halls, but actual governance power for the people whose lives, labor, and data the system depends on. It means mandatory disclosure that makes the decision rationale visible to those affected: not annual transparency reports drafted by the communications team, but real-time access to the information that drives structural decisions. It means asset lock-in, legal mechanisms that prevent the charitable, safety, or public-benefit commitments from being reweighted to zero when the optimization function changes. Foundations have minimum payout requirements precisely because the donors recognized that without them, the money would eventually optimize its way out of public benefit. The CZI LLC removed that lock. OpenAI's recapitalization removed the investor-return cap that its capped-profit structure had set. Each removal was a structural decision to eliminate a constraint that existed to protect the public interest.

The pattern is consistent: the precise structural features that would constrain optimization harm (mandatory payouts, board accountability, disclosure requirements, asset lock-in, investor caps, safety commitments) are systematically engineered out by the very organizations that most urgently need them. This is not a coincidence. It is the optimization function doing what optimization functions do: identifying constraints and removing them. The only intervention that works is governance the founder cannot engineer around.

Naming What Was Previously Invisible

In 1961, Hannah Arendt sat in a Jerusalem courtroom and watched Adolf Eichmann, the architect of the logistics of the Holocaust, present himself as a competent bureaucrat who had followed orders and optimized processes. Arendt was a political theorist, not a psychologist. She was not qualified to offer a clinical assessment of Eichmann, and her framing, "the banality of evil," was not a diagnostic claim. It was a vocabulary extension: a careful naming of a pattern of harm that the existing moral vocabulary could not capture. The standard vocabulary demanded that Eichmann be a monster. He was not. He was something worse: a person whose decision architecture had made moral reasoning unnecessary. The harm was not produced by malice but by the absence of any structural occasion for moral reflection.

Arendt's framing has been complicated over the decades. Bettina Stangneth's research on the Sassen tapes suggests Eichmann was more ideologically committed than Arendt believed, and scholars continue to debate the precise mechanism of his moral abdication. The framing endured not because it was a perfect portrait of one man but because it named a structural pattern that people recognized and that the prior vocabulary had made invisible. That is what vocabulary extensions do. They don't settle every case. They make a previously invisible category of harm available for analysis, accountability, and structural intervention.

This piece is attempting the same move at a smaller scale, for the AI age. The founders building the most consequential technology in human history are not evil. Many of them are sincere, philanthropic, and personally kind. They are also, with documentable consistency, building decision architectures that insulate them from the moral weight of the harm their systems produce, and systematically removing every structural mechanism that would force the affected parties' interests into the decision frame. Calling them evil doesn't work, because it's not true. Calling them good doesn't work, because the harm is real. What works is naming the architecture, and then changing it.

We can do that. But we need the vocabulary first.